The Enterprise Governance Infrastructure to Scale AI Agents

Every enterprise already knows how to manage a growing workforce. At a certain scale, informal management gives way to institutional infrastructure, giving the organization the structure, accountability, and continuous development capability to scale with confidence.

AI agents are augmenting human teams in growing numbers across every business process and every function. That same inflection point has arrived for enterprise agent portfolios. This is a look at the governance architecture operational backbones require.

The Strategic Moment for Enterprises

The pace of AI agent deployment in large organizations has crossed a major threshold. What began as controlled pilots in isolated business functions has become a distributed portfolio of production systems, each interacting with real customers, sensitive data, and complex business processes. The gains are tangible: sales operations have multiplied their previous productivity; customer service functions are delivering more consistent experiences across geographies; financial operations that depended on manual analysis are returning answers in seconds, just to name a few. Agent portfolios in every business function are expanding.

Every organization that has scaled a human workforce has faced the same inflection point. The moment when informal management reaches its limits and deliberate governance infrastructure is needed for further growth. This is not about treating agents as employees. It's about recognizing that the same organizational discipline and platforms required to scale the human workforce is now required to scale the agent portfolio. As agent portfolios grow in scale, scope, complexity, and business criticality, that same inflection point has arrived. The organizations that recognize it early will be the ones that scale furthest.

Consider a financial services organization running an AI agent across customer invoice interactions like handling overcharge disputes, line-item explanation requests, address changes, and payment arrangements. A closer look at one month of production operations reveals the fuller picture that any scaling operation needs: which interactions resolved cleanly, which escalated to human agents, where responses had drifted from current business policy, and where residual risk had quietly accumulated. Each dimension pointed directly to a specific improvement opportunity.

That level of operational insight is routine for any well-run human workforce. For most agent portfolios, it is still out of reach. The reason: this insight rarely resides in the agents themselves.

It lives at a level above the agent and human orchestration layer, where governance infrastructure sees across agents, human handoffs, tool calls, and business outcomes. Individual agentic level observability is the first step, but the full governance signal only emerges above the orchestration layer, where the full picture becomes visible. It is here that governance infrastructure turns agent activity into organizational intelligence.

Five Infrastructure Capabilities for ScalingAgents

Today's enterprise agents are not LLM wrappers answering questions. They are autonomous reasoning systems that plan multi-step workflows, make sequential decisions, invoke tools, query databases, call APIs, and coordinate with other agents, all while working alongside human teams in a continuous, fluid interplay. It is precisely that interplay of agents: handing off to humans, humans directing agents, decisions shared across both, that makes enterprise-scale agent operations fundamentally more complex to govern than anything that came before. The monitoring and evaluation approaches designed for simpler systems were not built for this dynamic, and the gap shows as portfolios scale.

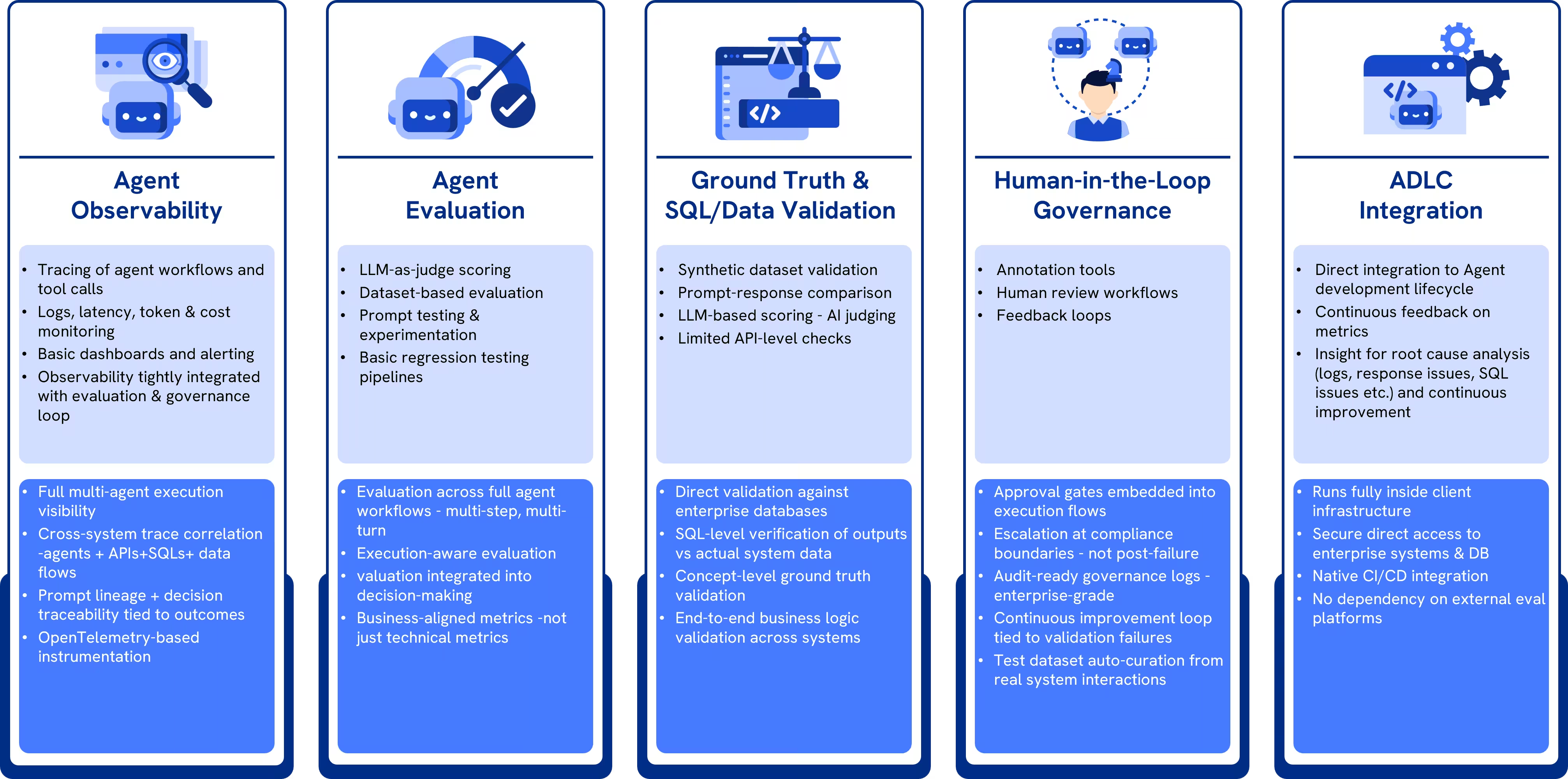

Five infrastructure capabilities become critical as organizations move from early agent deployments to enterprise-scale operations. Each one addresses a dimension of governance that current tooling leaves partially solved at best.

End-to-end operational observability: Knowing that an agent produced an output is not the same as understanding how it got there nor the various steps involved in that journey. Teams need trace-level visibility across the full interaction: every reasoning step, every tool invoked, every SQL query issued, every API or AI/ML models called and every handoff between agents. When something goes wrong in a multi-step workflow, tracing it back is rarely straightforward.

Context-aware evaluation: A reasoning agent that constructs a perfectly logical multi-step plan against an outdated data or schema, or executes a workflow based on a business rule that has recently changed, will pass every generic quality check and produce wrong outcomes that are difficult to debug, especially as the number of agents grows. Meaningful evaluation requires attaching the organization's own semantic layer, data models, business rules, and real-time ground truth to every assessment. Without that context, evaluation measures the quality of the reasoning, not the correctness of the outcome.

Human-in-the-Loop (HITL) Workflows: As agents handle higher-stakes decisions autonomously, the moments where humans must be in the loop become more important. What enterprises need is intelligent routing of those interactions to the right domain expert, for instance, a finance SME reviewing invoice agent decisions, or a CX specialist reviewing customer service escalations. The reasoning behind decisions needs to be captured, and findings fed back systematically to agent development teams.

Business KPI alignment: Boards and risk committees cannot govern what they cannot measure in their own terms. Translating agent performance into business outcomes, like resolution rates by interaction type, policy adherence rates, financial exposure from incorrect commitments, requires a governed KPI mapping layer that connects evaluation metrics to the KPIs leadership actually tracks. Without it, AI agent performance remains a technology metric, invisible to the people accountable for business outcomes.

Agent Development Lifecycle (ADLC) integration: Evaluation findings that sit in dashboards do not improve agents. The feedback loop from evaluation to the development team needs to be built into the agent development lifecycle as a first-class input. For every new agent release, structured evaluation should trigger automatically, regression detection should be automated, and structured findings delivered directly to the development team in a form they can act on.

Most organizations we work with have already assembled some combination of observability tools, evaluation scripts, and manual review processes. The gaps aren't from lack of effort. They are structural. They reach for point solutions that weren't designed to work together at the level governance requires.

Building the AgentGovernance Operating System for Scale

R-LiveMeasure provides the five infrastructure capabilities described above as an integrated, enterprise-owned system. It moves beyond the collection of agent-specific observability tools requiring custom integrations and aggregations to function together. The design philosophy reflects our experience with large-scale agent deployments: governance, at enterprise scale, is not a layer added on top of agent operations. It is the operating system within which agent operations run.

One of the major design decisions in R-LiveMeasure is the definition of the interaction record: the complete, unified trace of a single business process including every turn in the conversation, every tool call issued, every agent handoff, every API invocation, and every database call, all treated together as one unit of governance. An invoice dispute is one interaction record. A payment arrangement negotiation is one interaction record. R-LiveMeasure evaluates outcomes as complete business processes, which aligns the unit of evaluation with the unit of business accountability. When leadership asks how many invoice disputes were resolved accurately this month, R-LiveMeasure provides that answer at the business level, not as an aggregation of scores for turn-level conversations.

Each interaction record carries a versioned context bundle: the organization’s specific data schemas, semantic layer definitions, business rules, and ground truth at the time the interaction occurred. As the business evolves, evaluation evolves with it. Historical interactions remain meaningful because they are always assessed against the context that was current at the time.

The HITL layer functions as a genuine workflow engine. Interactions are routed to reviewers based on agent type, risk classification, and domain expertise. A finance process agent routes to a finance SME, a customer service agent routes to a CX specialist. Reviewer judgements are calibrated over time to ensure consistency; the reasoning behind those judgements is captured and becomes institutional knowledge that progressively improves automated evaluators.

ADLC integration ensures that evaluation findings flow directly into the agent development lifecycle. Structured evaluation means that the governance system actively shapes what gets deployed, rather than recording what was deployed after the fact.

How R-LiveMeasure Is Deployed?

R-LiveMeasure is delivered as an enterprise accelerator, a framework that organizations deploy inside their own infrastructure, configure according to their own governance policies, and own outright from the point of transition. The rationale is straightforward: a governance system that routes sensitive agent interaction data cannot be passed through a third-party platform. Such vendor dependency is structurally inconsistent with enterprise governance requirements. The data, the policies, and the audit trail belong permanently to the organization.

After transition, the organization holds the R-LiveMeasure codebase, configuration, and governance logic in its own repository. An optional ongoing engagement is available for organizations that want continued involvement as their agent portfolio evolves. Every engagement is designed to end with a client that has fully internalized the governance capability.

Build versus adopt: Organizations building equivalent governance infrastructure from scratch typically require 8 to 12 months of sustained senior engineering investment. R-LiveMeasure compresses initial deployment to 3 to 4 months by providing the hardened infrastructure layer. The organization contributes what is uniquely their own: its policies, its business rules, and its governance decisions. That is the right division of labor. It allows governance to scale along with the growth of the agent portfolio.

The Infrastructure Worth Making

Every enterprise that scaled its human workforce beyond a certain point faced the same inflection: informal management giving way to institutional infrastructure. Performance frameworks, accountability structures, feedback mechanisms, continuous development cycles. The organizations that built that infrastructure early not only managed their workforce better; they unlocked the ability to grow it further, deploy it with greater confidence, and extract more value from it year after year.

Agent portfolios are at that same inflection point now. Across every sector, the first wave of deployments has proven the case. Agents work. They deliver. The question is whether the organization has the operational backbone to govern what it is building, improve it systematically, and scale it without accumulating risk in the process.

The enterprises that navigate this well will share three characteristics: complete visibility into what every agent in their portfolio is doing, not at the response level, but at the level of business outcomes, traced back through every decision, every tool call, every human handoff; a governed connection between agent performance and the KPIs boards and business leaders actually track; and a closed loop between evaluation and agent development so that every finding makes the next generation of agents measurably better.

R-LiveMeasure is built for exactly this moment. Built for organizations that have proven the value of AI agents and are now ready for the operational backbone that allows that value to grow.

Most organizations we work with discover the gap is larger than expected. They’ve been paying attention. But the tooling they have wasn't designed for what they're now running. That's usually where we start.